
Temporal Deep Learning for Surgical Video Analytics

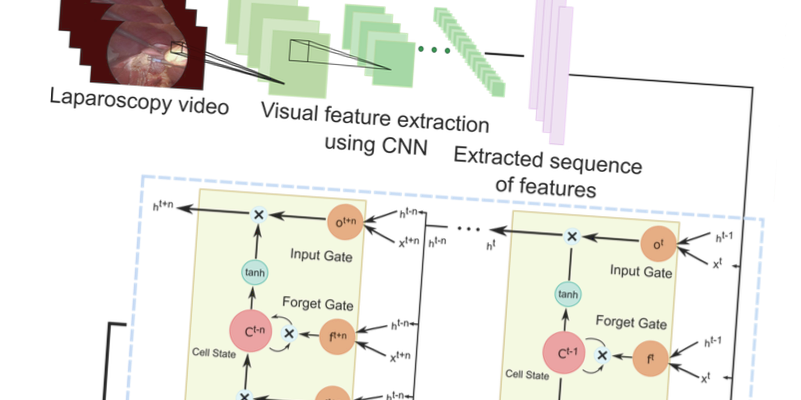

The surgical workflow in minimally invasive interventions such as laparoscopy can be modeled using information about which tools are in use. The endoscopic video stream, which is available during surgery primarily for viewing the surgical site, can be leveraged for this purpose without additional sensors or instruments. We propose a method that learns to detect tool presence in laparoscopy videos by exploiting the temporal dependencies inherent in systematically executed surgical procedures. It learns both long- and short-range relationships between higher-level abstractions of the spatial visual features extracted from the surgical video. The framework uses a Convolutional Neural Network (CNN) to extract visual features and a Long Short-Term Memory (LSTM) network to encode temporal information.
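The CNN-then-LSTM idea can be illustrated with a minimal NumPy sketch. It assumes that per-frame CNN feature vectors have already been extracted, and random placeholders stand in for them here. The class name `LSTMToolDetector`, the 512-dimensional features, the hidden size, the seven tool classes and the 0.5 threshold are all hypothetical choices made for illustration. They are not the authors' configuration, and the weights are untrained, so the output probabilities carry no meaning. The sketch only shows how an LSTM carries state across frames and produces a per-frame tool-presence score.

```python
import numpy as np


def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))


class LSTMToolDetector:
    """Hypothetical sketch: an LSTM over per-frame CNN feature vectors,
    followed by a per-frame multi-label sigmoid head (one output per tool)."""

    def __init__(self, feat_dim, hidden_dim, num_tools, seed=0):
        rng = np.random.default_rng(seed)
        scale = 1.0 / np.sqrt(feat_dim + hidden_dim)
        # Stacked weights for the input, forget, candidate and output gates,
        # applied to the concatenation [x_t, h_{t-1}].
        self.W = rng.normal(0.0, scale, size=(4 * hidden_dim, feat_dim + hidden_dim))
        self.b = np.zeros(4 * hidden_dim)
        # Linear head mapping the hidden state to one logit per tool.
        self.W_out = rng.normal(0.0, scale, size=(num_tools, hidden_dim))
        self.b_out = np.zeros(num_tools)
        self.hidden_dim = hidden_dim

    def forward(self, features):
        """features: array of shape (T, feat_dim), one CNN feature vector per frame.
        Returns tool-presence probabilities of shape (T, num_tools)."""
        H = self.hidden_dim
        h = np.zeros(H)  # hidden state carried from frame to frame
        c = np.zeros(H)  # cell state (the LSTM's long-term memory)
        probs = []
        for x_t in features:
            z = self.W @ np.concatenate([x_t, h]) + self.b
            i = sigmoid(z[0:H])            # input gate
            f = sigmoid(z[H:2 * H])        # forget gate
            g = np.tanh(z[2 * H:3 * H])    # candidate cell update
            o = sigmoid(z[3 * H:4 * H])    # output gate
            c = f * c + i * g
            h = o * np.tanh(c)
            probs.append(sigmoid(self.W_out @ h + self.b_out))
        return np.stack(probs)


# Usage with placeholder features (a real pipeline would take these from a CNN).
rng = np.random.default_rng(1)
num_frames, feat_dim, num_tools = 10, 512, 7
features = rng.normal(size=(num_frames, feat_dim))
model = LSTMToolDetector(feat_dim, hidden_dim=64, num_tools=num_tools)
probs = model.forward(features)
presence = probs > 0.5  # boolean (frame, tool) presence matrix
print(probs.shape)  # (10, 7)
```

In this sketch, each tool has its own sigmoid output rather than sharing a softmax. That is one natural choice because more than one instrument can be visible in the same frame.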